A vertex shader is a function that runs on the GPU and manipulates the positions of a geometry's vertices in real time. A circle can be transformed into a blob. Adding sine waves turns a plane into an ocean. Splitting triangles apart one by one makes images disappear.

Vertex shaders are also in charge of changing the "coordinate space" of the vertices. How often have you seen projectionMatrix or modelMatrix or viewMatrix (modelViewMatrix)? These change coordinates between different "coordinate spaces".

Coordinate spaces are like languages. We talk 3D while our screen talks 2D, so we need to translate. Vertex shaders are a big part of the translation process, but they are not the whole story.
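As a rough sketch of that translation, here is a minimal vertex shader that spells out each space in turn. It assumes a three.js ShaderMaterial, which is what the matrix names above suggest and which declares `position`, `modelMatrix`, `viewMatrix` and `projectionMatrix` for you. The local variable names are only illustrative.

```glsl
// Minimal sketch. Assumes three.js ShaderMaterial, which injects
// position, modelMatrix, viewMatrix and projectionMatrix automatically.
void main() {
  vec4 localPosition = vec4(position, 1.0);           // model (local) space
  vec4 worldPosition = modelMatrix * localPosition;   // world space
  vec4 viewPosition  = viewMatrix * worldPosition;    // view (camera) space
  gl_Position = projectionMatrix * viewPosition;      // clip space
}
```

In three.js, modelViewMatrix is the precomputed product of viewMatrix and modelMatrix, so the two middle steps are usually collapsed into one. The remaining steps from clip space to screen pixels happen after the vertex shader, which is why the shader is only part of the story.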

Getting started

- Distorting planes based on mouse speed

- Displacing with noise

- Distorting spheres

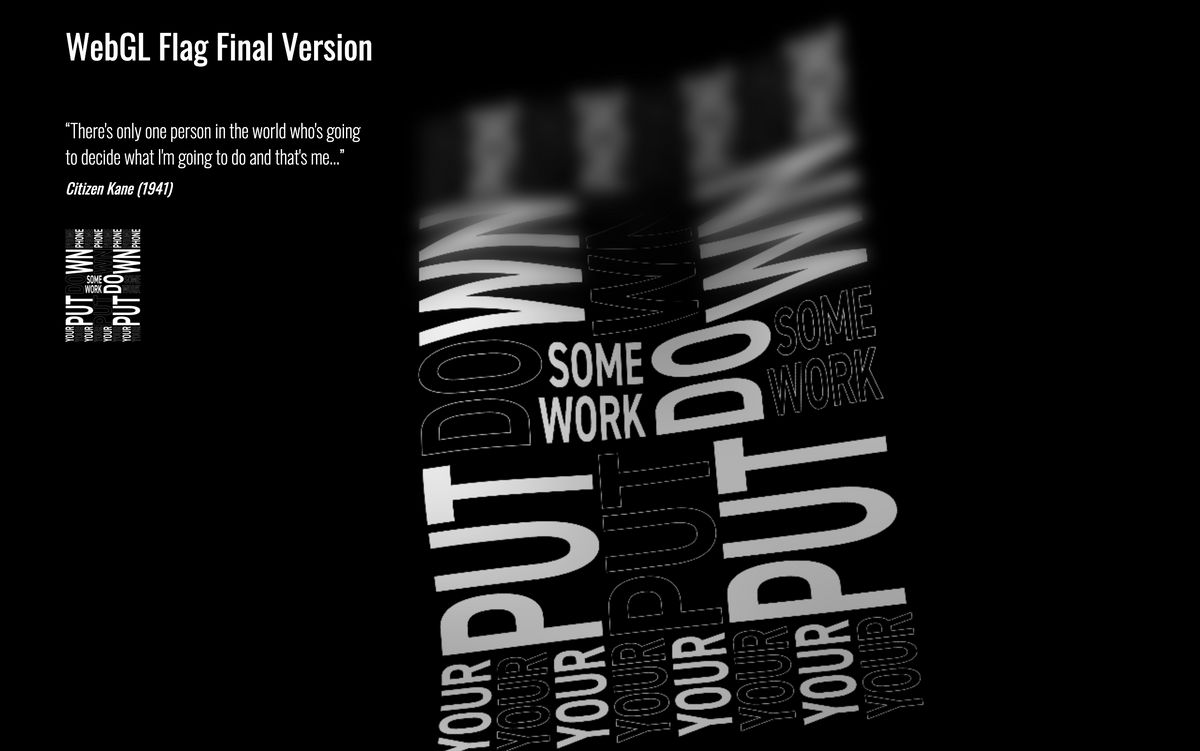

- How to make a waving flag

Plane flag wave distortion

With a sine wave, you can get away with a simple wave distortion. Offset the wave by the UVs, slap time into it, and you get a wave like the one in this demo (source).

position.z += sin(uTime + uv.y);

However, in this demo, Linchingchester uses Perlin noise and rotates the wave to create a more natural-looking displacement.

This other demo has a similar process.
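Going back to the simple sine version, the one-liner can be dropped into a complete vertex shader roughly like this. This is a sketch, not the code from any of the demos: it assumes three.js ShaderMaterial built-ins, and `FREQUENCY` and `AMPLITUDE` are made-up tuning constants.

```glsl
// Sketch of a waving plane. Assumes three.js ShaderMaterial built-ins
// (position, uv, modelViewMatrix, projectionMatrix).
uniform float uTime; // elapsed seconds, updated every frame from JavaScript

// Hypothetical tuning constants, not taken from the demos.
const float FREQUENCY = 6.0;
const float AMPLITUDE = 0.2;

void main() {
  // Attributes are read-only in GLSL, so work on a copy of position.
  vec3 transformed = position;

  // Offset the phase by uv.y so the wave travels across the plane.
  transformed.z += sin(uTime + uv.y * FREQUENCY) * AMPLITUDE;

  gl_Position = projectionMatrix * modelViewMatrix * vec4(transformed, 1.0);
}
```

The plane needs enough segments for the wave to show up. With only a handful of vertices, there is nothing for the sine to bend.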

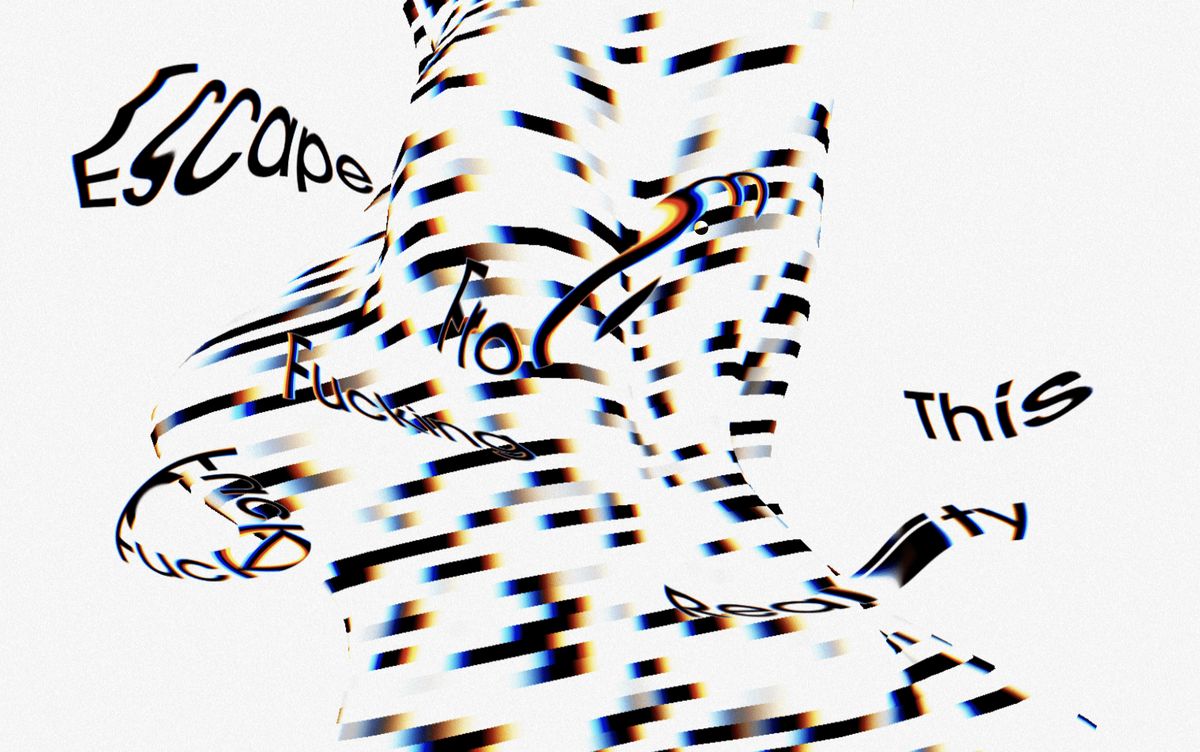

Blob with lines

Displacing a sphere is quite different from displacing a plane. Unlike the plane above, we can't single out one coordinate (position.z). The sphere needs to expand in all directions. The simple approach is to scale it:

float displacement = snoise(position) + 1.;

transformedPosition = position * displacement;

However, this approach only works on spheres centered on the origin, where each vertex position already points straight outward. It wouldn't work for more complicated geometries like a torus or a model. The better approach, which spite used in this demo, is to expand along the normals.

transformedPosition = position + normal * displacement;

Normals point in the direction the surface is facing, which is exactly what we want.
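A self-contained sketch of the normal-based approach could look like the following. It assumes three.js ShaderMaterial built-ins, and `fakeNoise` is a hypothetical stand-in for `snoise` so the example compiles on its own. It is a sum of sines, not real simplex noise.

```glsl
// Sketch: displace along normals. Assumes three.js ShaderMaterial built-ins
// (position, normal, modelViewMatrix, projectionMatrix).
uniform float uTime; // elapsed seconds, updated from JavaScript

// Placeholder for snoise(): a cheap sum of sines in the range [-1, 1].
// This is NOT simplex noise; swap in a real noise function for organic results.
float fakeNoise(vec3 p) {
  return (sin(p.x * 3.0 + uTime) + sin(p.y * 4.0 + uTime * 1.3) + sin(p.z * 5.0)) / 3.0;
}

void main() {
  // 0.3 is an arbitrary strength chosen for illustration.
  float displacement = fakeNoise(position) * 0.3;

  // Push each vertex outward (or inward) along its own normal.
  vec3 transformedPosition = position + normal * displacement;

  gl_Position = projectionMatrix * modelViewMatrix * vec4(transformedPosition, 1.0);
}
```

Because each vertex moves along its own normal, the same shader works unchanged on a sphere, a torus, or an imported model, as long as the geometry has normals.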

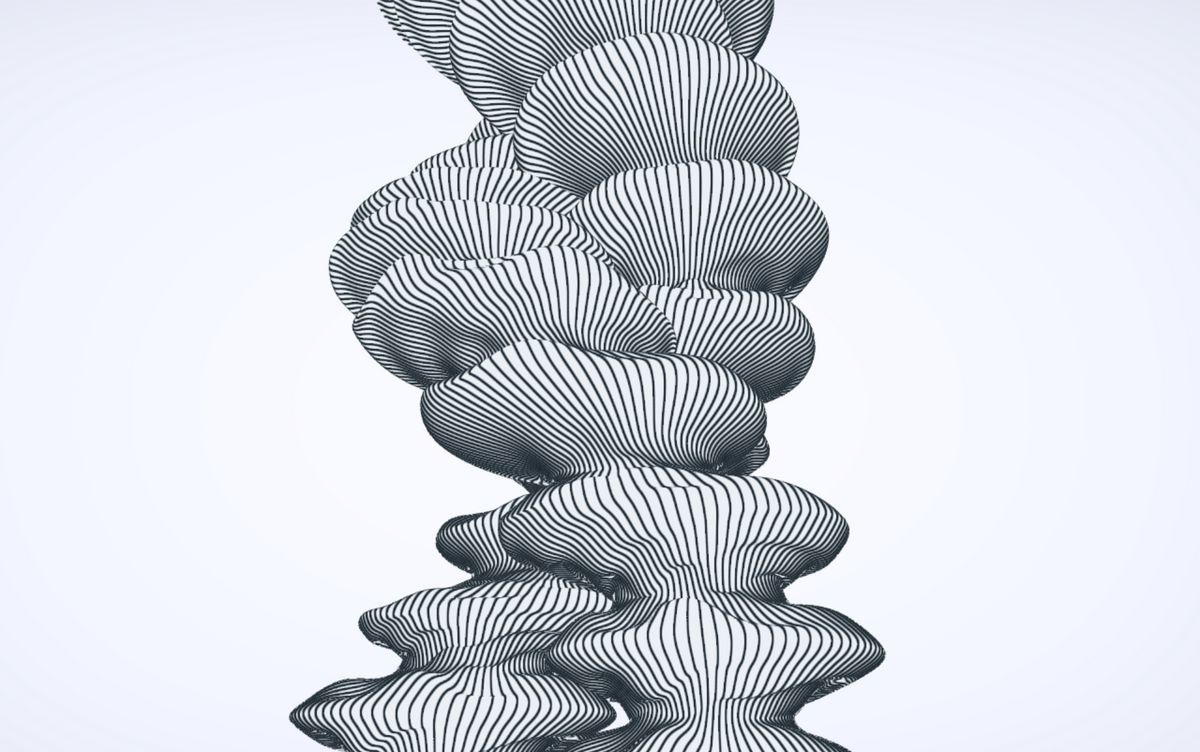

Invisible Torus Distortion

Keita also chose the "expand the position based on normal" approach, but the visual treatment is unique. Making the tube the same color as the background makes our brain infer the shape from how the lines move along it.

Tube distortion

The approach Avin took here is to construct the geometry in the vertex shader. This allows for more control over its surface at the expense of performance.

Instead of using the position attribute to store the x/y/z position, it stores the angle of each vertex in position.y and the height in position.x. The angle is used to calculate the position using sin/cos.

float angle = position.y;

float height = position.x;

calculatedPosition.x = sin(angle)*8.; // 8 is the radius

calculatedPosition.z = cos(angle)*8.;

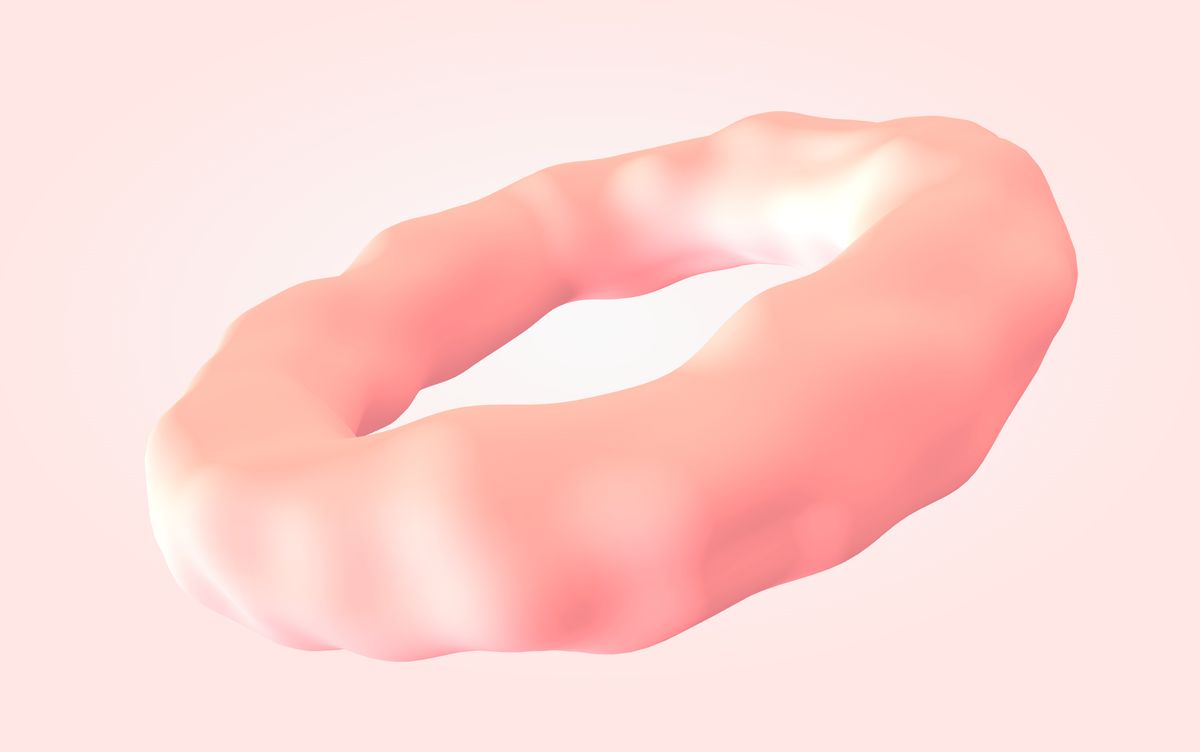

calculatedPosition.y = height;How to over-engineer a torus

I've got a tendency to over-complicate things. In this demo (Source), I deconstructed and re-generated the torus in the vertex shader. The simpler solution would be to just expand by normals. Less math, less headaches.

distorted = transformed + normal * radiusVariation;

However, I'm delighted to over-engineer things. I learned how to programmatically create toruses. Make stupid stuff, have fun and make demos!
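If you do want to go the long way, a torus can be rebuilt from two angles. The sketch below is not the code from my demo. It assumes three.js ShaderMaterial built-ins and a mesh whose uv.x runs from 0 to 1 around the ring and whose uv.y runs from 0 to 1 around the tube. The radius constants and the wobble are made up for illustration.

```glsl
// Sketch: rebuild a torus from its UVs. Assumes three.js ShaderMaterial
// built-ins (uv, modelViewMatrix, projectionMatrix) and the UV layout
// described above. All constants are hypothetical.
uniform float uTime; // elapsed seconds, updated from JavaScript

const float TWO_PI = 6.28318530718;
const float RING_RADIUS = 2.0; // distance from torus center to tube center
const float TUBE_RADIUS = 0.5; // radius of the tube itself

void main() {
  float u = uv.x * TWO_PI; // angle around the ring
  float v = uv.y * TWO_PI; // angle around the tube

  // Vary the tube radius along the ring to distort the shape.
  float tube = TUBE_RADIUS * (1.0 + 0.3 * sin(u * 4.0 + uTime));

  // Standard parametric torus lying in the XY plane.
  vec3 rebuilt = vec3(
    (RING_RADIUS + tube * cos(v)) * cos(u),
    (RING_RADIUS + tube * cos(v)) * sin(u),
    tube * sin(v)
  );

  gl_Position = projectionMatrix * modelViewMatrix * vec4(rebuilt, 1.0);
}
```

The original position attribute is ignored entirely here, which is the whole point: the shape lives in the math, so any parameter of the torus can be animated per vertex.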